Installing and running Vagrant is generally pretty straightforward. For a non-Linux user, though, it can get a little tricky when combining Vagrant with the Windows Subsystem for Linux (WSL). Even once you get the installation right, there are still some stumbling blocks waiting for you when you try to run Ansible playbooks during vagrant up.

Vagrant is really helpful when you want to set up a predefined, reproducible environment to run tests or to develop on. Vagrant uses so-called boxes, which can be thought of as Docker images, just for VMs. You describe them in a Ruby-style configuration file and can deploy even a multi-VM environment from that one file by running vagrant up.

I presume that you already have WSL2 and VirtualBox running on your system. If not, set them up and then come back here; installing WSL and VirtualBox is not part of this introduction. Since you are already on a Windows machine, you may be tempted to use Hyper-V as your hypervisor. I tried it and cannot recommend Hyper-V in combination with Vagrant and WSL: after many hours of trying, I still hadn’t got a single VM running and accessible with Hyper-V and Vagrant.

Install Vagrant

You need to install Vagrant on your Windows machine as well as in WSL2, and it must be the same version in both environments. You can find more information regarding the installation here. On Windows, download and run the MSI package. For WSL2, you can download the Vagrant package (the same version you installed on Windows) directly from here and install it with the package manager included in your distribution (most likely apt). On Ubuntu, you can alternatively add the HashiCorp repository and install Vagrant using the following lines:

curl -fsSL https://apt.releases.hashicorp.com/gpg | sudo apt-key add -

sudo apt-add-repository "deb [arch=amd64] https://apt.releases.hashicorp.com $(lsb_release -cs) main"

sudo apt-get update && sudo apt-get install vagrant

Then, as the final step, add some environment variables to your WSL shell profile (e.g. .bashrc or .zshrc, depending on which shell you use).

Attention: Adjust the paths to your user home and your VirtualBox installation to match your setup!

# Setup Vagrant

export VAGRANT_WSL_ENABLE_WINDOWS_ACCESS="1"

export VAGRANT_WSL_WINDOWS_ACCESS_USER_HOME_PATH="/mnt/c/Users/username/"

export PATH="$PATH:/mnt/d/Programs/Virtualbox"

- VAGRANT_WSL_ENABLE_WINDOWS_ACCESS: Enables Vagrant to use features that are installed on your Windows client rather than within WSL. For instance, VirtualBox is then used from the Windows installation instead of being expected inside WSL.

- VAGRANT_WSL_WINDOWS_ACCESS_USER_HOME_PATH: Path to your Windows user home directory as seen from within WSL. Adjust the entry to your configuration (replace username with your Windows user name).

- PATH: Add the VirtualBox installation directory to your PATH environment variable.
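Before moving on, it can help to confirm that the three settings above actually took effect in a new shell. The following is a minimal sketch, not part of Vagrant: check_vagrant_wsl_env is a hypothetical helper name, and it assumes the VirtualBox command-line tool is called VBoxManage.exe, as in a standard Windows installation.

```shell
# Sketch: sanity-check the WSL-side Vagrant environment.
# check_vagrant_wsl_env is a hypothetical helper, not a Vagrant command.
check_vagrant_wsl_env() {
  problems=0

  # Vagrant only uses Windows-side tools when this is set to 1.
  if [ "${VAGRANT_WSL_ENABLE_WINDOWS_ACCESS:-}" != "1" ]; then
    echo "VAGRANT_WSL_ENABLE_WINDOWS_ACCESS is not set to 1"
    problems=1
  fi

  # The Windows home directory must exist as seen from WSL.
  if [ ! -d "${VAGRANT_WSL_WINDOWS_ACCESS_USER_HOME_PATH:-}" ]; then
    echo "VAGRANT_WSL_WINDOWS_ACCESS_USER_HOME_PATH is not an existing directory"
    problems=1
  fi

  # Assumption: the VirtualBox CLI is named VBoxManage.exe.
  if ! command -v VBoxManage.exe >/dev/null 2>&1; then
    echo "VBoxManage.exe not found on PATH"
    problems=1
  fi

  if [ "$problems" -eq 0 ]; then
    echo "Vagrant WSL environment looks sane"
  fi
  return "$problems"
}
```

Paste the function into your shell and run check_vagrant_wsl_env. It prints one line per problem it finds and returns a non-zero status if anything is missing.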

When running vagrant -v, your output should look something like this:

❯ vagrant -v

Vagrant 2.2.19

Using Vagrant

You can find some Vagrantfiles in my GitHub repository. We will look at one that builds a single VM and runs an Ansible playbook against the newly built VM. Create a Vagrantfile with the following content.

# -*- mode: ruby -*-
# vi: set ft=ruby :

Vagrant.configure("2") do |config|
  # General configuration
  config.vm.box = "generic/ubuntu2004"
  config.ssh.insert_key = false

  config.vm.provider :virtualbox do |v|
    v.memory = 512
    v.linked_clone = true
  end

  # VM configuration
  config.vm.define "ubuntu2004" do |ubuntu2004|
    ubuntu2004.vm.hostname = "ansible"
  end

  config.vm.provision "ansible" do |ansible|
    ansible.playbook = "playbook.yml"
  end
end

We will create a (really minimal) Ubuntu VM with this Vagrantfile. The last section is the interesting part: it controls which Ansible playbook runs after the VM has been deployed. Let’s say we want to set up an Apache web server using an Ansible playbook. Create a playbook.yml with the following content alongside your Vagrantfile.

---
- hosts: "all"
  become: true
  tasks:
    - name: Install apache2
      apt:
        name: apache2
        update_cache: true
        state: latest

When you now run vagrant up, Vagrant will create a VM in VirtualBox, boot it and run the playbook on it (if that fails, see Fixing issues below).

❯ vagrant up

Bringing machine 'ubuntu2004' up with 'virtualbox' provider...

==> ubuntu2004: Cloning VM...

==> ubuntu2004: Matching MAC address for NAT networking...

==> ubuntu2004: Checking if box 'generic/ubuntu2004' version '3.6.8' is up to date...

==> ubuntu2004: Setting the name of the VM: ansible_ubuntu2004_1645361591133_80968

==> ubuntu2004: Fixed port collision for 22 => 2222. Now on port 2200.

==> ubuntu2004: Clearing any previously set network interfaces...

==> ubuntu2004: Preparing network interfaces based on configuration...

ubuntu2004: Adapter 1: nat

==> ubuntu2004: Forwarding ports...

ubuntu2004: 22 (guest) => 2200 (host) (adapter 1)

ubuntu2004: 22 (guest) => 2200 (host) (adapter 1)

==> ubuntu2004: Running 'pre-boot' VM customizations...

==> ubuntu2004: Booting VM...

==> ubuntu2004: Waiting for machine to boot. This may take a few minutes...

ubuntu2004: SSH address: 172.22.32.1:2200

ubuntu2004: SSH username: vagrant

ubuntu2004: SSH auth method: private key

ubuntu2004: Warning: Connection reset. Retrying...

ubuntu2004:

ubuntu2004: Vagrant insecure key detected. Vagrant will automatically replace

ubuntu2004: this with a newly generated keypair for better security.

ubuntu2004:

ubuntu2004: Inserting generated public key within guest...

ubuntu2004: Removing insecure key from the guest if it's present...

ubuntu2004: Key inserted! Disconnecting and reconnecting using new SSH key...

==> ubuntu2004: Machine booted and ready!

==> ubuntu2004: Checking for guest additions in VM...

==> ubuntu2004: Setting hostname...

==> ubuntu2004: Running provisioner: ansible...

ubuntu2004: Running ansible-playbook...

PLAY [all] *********************************************************************

TASK [Gathering Facts] *********************************************************

ok: [ubuntu2004]

TASK [Install apache2] *********************************************************

changed: [ubuntu2004]

PLAY RECAP *********************************************************************

ubuntu2004 : ok=2 changed=1 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

Fixing issues

When running Vagrant within WSL, I ran into two main problems.

- Getting an SSH connection refused error when running vagrant up. The problem and the solution are described in detail here, but here is the short version. When starting a VirtualBox VM with NAT configured, Vagrant adds a port forward from the guest’s SSH port 22 to port 2222 on the localhost address (127.0.0.1) of your Windows host. WSL2, however, uses a separate host network to reach your Windows client from within WSL (e.g. some 172.x.x.x address). Vagrant does not know about this and therefore tries to access 127.0.0.1:2222, which inside WSL2 points at WSL itself rather than at Windows.

The solution seems simple: install the Vagrant plugin virtualbox_WSL2 with the following command:

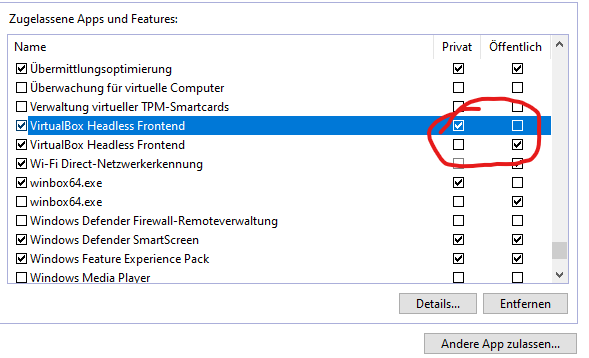

vagrant plugin install virtualbox_WSL2

This changed the error for me but did not fix it entirely. What was still missing was a Windows Firewall rule that allows access from WSL to the port forwarded by VirtualBox (2222). When you run vagrant up after installing the plugin, a Windows Firewall popup appears. Make sure you select both private and public networks for the access rule. If you missed that, you have to add the public network to the firewall rule afterwards.
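If you want to see which address WSL uses to reach Windows (the 172.x.x.x address mentioned above), you can read it from the routing table. This is a small sketch under the assumption that WSL2 runs with its default NAT networking, where the default gateway is the Windows host; default_gateway is a hypothetical helper name.

```shell
# Print the gateway of the default route from `ip route` output on stdin.
# Assumption: WSL2 default NAT networking, where this gateway is the Windows host.
default_gateway() {
  awk '$1 == "default" && $2 == "via" { print $3; exit }'
}

# Usage inside WSL2:
#   ip route show | default_gateway
```

In a default setup, the printed address would normally be the one that vagrant up reports as the SSH address once the plugin is installed.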

- An SSH connection from WSL to your Vagrant-built VM fails during publickey authentication with an error like WARNING: UNPROTECTED PRIVATE KEY FILE!. The cause is that files on the Windows drives mounted under /mnt appear world-accessible by default, which SSH refuses for private keys. You can find more details on the error here.

When running the playbook.yml with the Vagrant Ansible provisioner, you will most likely run into this error. I solved it by adding the following lines to my /etc/wsl.conf (as described in the GitHub issue).

# Enable extra metadata options by default

[automount]

enabled = true

root = /mnt/

options = "metadata,umask=77,fmask=11"

mountFsTab = false

Then I restarted WSL from within an elevated PowerShell:

Restart-Service -Name "LxssManager"

Philip
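After the restart, one way to confirm that the new mount options are in effect is to look at the permission bits of the private key that Vagrant hands to SSH. The sketch below is an illustration only: key_is_private is a hypothetical helper, it relies on GNU stat (as shipped with Ubuntu), and the key path in the usage comment is an assumption that depends on your Vagrant version.

```shell
# Succeed only if group and others have no permissions on the given file,
# which is what SSH expects of a private key.
key_is_private() {
  mode=$(stat -c '%a' "$1") || return 2
  case "$mode" in
    0|*00) return 0 ;;
    *)     echo "$1 has mode $mode; group/other bits must be 0"; return 1 ;;
  esac
}

# Usage (the path is an assumption, adjust it to where your key lives):
#   key_is_private "$VAGRANT_WSL_WINDOWS_ACCESS_USER_HOME_PATH/.vagrant.d/insecure_private_key"
```

With the automount options shown above, files under /mnt should show up with mode 700, which passes this check.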

Thank you, these notes got my WSL working with Vagrant once again.

Thanks for your feedback.

Thank you, this helped me too!

Hi @TheDatabaseMe,

First, thanks for this technical article about running a Vagrant box on VirtualBox through WSL.

I still have an issue while following the tutorial. I have dug a little deeper, and it seems the control socket is not created properly in my .ansible folder…

You can find the error message below:

fatal: [ubuntu2004]: UNREACHABLE! => {"changed": false, "msg": "Failed to connect to the host via ssh: Warning: Permanently added '[172.27.112.1]:2222' (ED25519) to the list of known hosts.\r\nControl socket connect(/mnt/c/users/user/.ansible/cp/597df28d48): Connection refused\r\nFailed to connect to new control master", "unreachable": true}

I can connect through vagrant ssh and with a plain ssh command: ssh vagrant@172.27.112.1 -p 2222

Do you have any idea about the above issue?

Best regards,

Tom

Hello Tom,

the error you posted does not come from Vagrant or SSH itself; it comes from Ansible. So the questions are: which version of Ansible are you using, is there a specific ansible.cfg, and what are the SSH settings within it?

In particular, double-check these settings:

[ssh_connection]

ssh_args = -C -o ControlMaster=auto -o ControlPersist=60s

If you share your ansible.cfg (if there is one) and your playbook with me, I may be able to help further. For now, I would just point you in this direction. Hope this helps.

Kind Regards

Philip

Well, for me it works with Ubuntu 20.04 based boxes – but “geerlingguy/rockylinux8” and “hashicorp/bionic64” (the only other two I’ve tried so far) throw a “Kernel Panic – not syncing: Fatal Exception” in the box itself – which seems to be due to the Windows Hypervisor.

If you turn Hypervisor off with something like “bcdedit /set hypervisorlaunchtype off” then the box starts up fine but then WSL doesn’t work anymore (similarly Docker Desktop will also fail to launch).

Any idea why “generic/ubuntu2004” seems to play nice with the Windows Hypervisor but the others don’t?

Could you share your Vagrantfile here?

Works for me with rockylinux8.

❯ vagrant up

Bringing machine 'rocky8' up with 'virtualbox' provider...

==> rocky8: Preparing master VM for linked clones...

rocky8: This is a one time operation. Once the master VM is prepared,

rocky8: it will be used as a base for linked clones, making the creation

rocky8: of new VMs take milliseconds on a modern system.

==> rocky8: Importing base box 'geerlingguy/rockylinux8'...

==> rocky8: Cloning VM...

==> rocky8: Matching MAC address for NAT networking...

==> rocky8: Checking if box 'geerlingguy/rockylinux8' version '1.0.0' is up to date...

==> rocky8: Setting the name of the VM: Projekte_rocky8_1666939967176_4740

==> rocky8: Clearing any previously set network interfaces...

==> rocky8: Preparing network interfaces based on configuration...

rocky8: Adapter 1: nat

rocky8: Adapter 2: hostonly

==> rocky8: Forwarding ports...

rocky8: 22 (guest) => 2222 (host) (adapter 1)

rocky8: 22 (guest) => 2222 (host) (adapter 1)

==> rocky8: Running 'pre-boot' VM customizations...

==> rocky8: Booting VM...

==> rocky8: Waiting for machine to boot. This may take a few minutes...

rocky8: SSH address: 172.31.208.1:2222

rocky8: SSH username: vagrant

rocky8: SSH auth method: private key

==> rocky8: Machine booted and ready!

==> rocky8: Checking for guest additions in VM...

==> rocky8: Setting hostname...

==> rocky8: Configuring and enabling network interfaces...

==> rocky8: Mounting shared folders...

rocky8: /vagrant => /mnt/e/Projekte

My environment is the following:

Virtualbox: 6.1.32

Vagrant: 2.3.1

Vagrantfile:

# -*- mode: ruby -*-
# vi: set ft=ruby :

Vagrant.configure("2") do |config|
  # General configuration
  config.vm.box = "geerlingguy/rockylinux8"
  config.ssh.insert_key = false

  config.vm.provider :virtualbox do |v|
    v.memory = 512
    v.linked_clone = true
  end

  # VM configuration
  config.vm.define "rocky8" do |rocky8|
    rocky8.vm.hostname = "rocky8"
    rocky8.vm.network "private_network", ip: "192.168.56.10"
  end
end

You haven’t updated to VirtualBox 7, have you?

Kind regards

Philip

Thanks for replying!

I tried out the other boxes just using the Vagrantfile generated with “vagrant init” – that might have been a bit naive of me. I will try out your Vagrantfile and post here the results.

This is my first foray into vagrant managed vm’s and when I figured out it doesn’t really work with HyperV I found your post and downloaded the latest version of VirtualBox – which is 7.0.2r154219.

And I just had a look at the documentation on Vagrant and saw that 7.x is not listed as supported. Haha. Ok. I guess I should uninstall 7 and install 6.

Yes, Virtualbox 7 is not supported by Vagrant right now. That’s why I was asking. Not sure if this solves your problem, but worth a try.

Philip

Hmm, no it looks like support for Virtual Box 7 was introduced in 2.3.2 – which explains why I didn’t have any problems. https://github.com/hashicorp/vagrant/blob/v2.3.2/CHANGELOG.md

In any case, thanks for taking the time to respond to my comments. I’ll see if I can figure it out…. if I do I’ll post here – might be useful for anyone else encountering similar issues.

Thanks and good luck.

No, unfortunately I still get Kernel Panic in those VMs even with Virtual Box 6 and with your Vagrant File.

I’m on Windows 11 though, and I’m starting to wonder if there isn’t something up with the latest update because I found posts complaining about similar problems with Virtual Box under Win 11.

The solution seems to be to disable the Hypervisor – but then I lose WSL and Docker Desktop. I’ll try VMWare as a provider. Maybe that works.

Right, so here is my working theory: after the Windows v1803 update it became possible to run VirtualBox and VMWare alongside the HyperVisor manager. In VirtualBox you can specify this in the “Paravirtualization Interface” setting – by default though, it will select Hyper-V in order for it to work when Windows HyperVisor is enabled.

However, using this API implies that boxes not marked compatible with Hyper-V won’t work.

Here’s a listing of all the boxes marked as Hyper-V compatible: https://app.vagrantup.com/boxes/search?utf8=%E2%9C%93&sort=downloads&provider=hyperv&q=

I tested some of those boxes and they don’t enter a Kernel Panic when launched.

And seeing as how VirtualBox is just an interface on top of HyperV I decided to give using HyperV as the provider a try again. This blog post proved very useful:

https://learn.microsoft.com/en-us/virtualization/community/team-blog/2017/20170706-vagrant-and-hyper-v-tips-and-tricks

@TheDatabaseMe

But given all this, I still don’t understand how you managed to get the rockylinux8 box to work with Vagrant, WSL and VirtualBox. Are you perhaps using WSL1 ?

Nope. WSL2 here. AFAIK none of this would even be possible under WSL1.

Have you tried this? https://superuser.com/questions/1733304/fresh-new-centos-on-oracle-vm-virtualbox-kernel-panic-not-syncing-fatal-exce

I definitely don’t have Core Isolation enabled – but I went ahead and explicitly disabled VBS in the group policy – because why not? But I still have the same problem.

In any case, the disabling of VBS seems to be related to Hyper-V. People that did not explicitly enable Hyper-V would still have it active if they enabled VBS: https://forums.virtualbox.org/viewtopic.php?f=25&t=99390

I believe the problem is due to this fallback “NEM” mechanism VirtualBox employs if you’ve got Hyper-V enabled – looking at the logs produced it gets explicitly mentioned:

```
00:00:02.073013 HM: HMR3Init: Attempting fall back to NEM: VT-x is not available
00:00:02.096875 NEM: info: Found optional import WinHvPlatform.dll!WHvQueryGpaRangeDirtyBitmap.
00:00:02.096882 NEM: info: Found optional import vid.dll!VidGetHvPartitionId.
00:00:02.096884 NEM: info: Found optional import vid.dll!VidGetPartitionProperty.
00:00:02.096916 NEM: WHvCapabilityCodeHypervisorPresent is TRUE, so this might work...
00:00:02.097019 NEM: Warning! Unknown CPU features: 0x2e1b7bcfe7f7859f
00:00:02.097022 NEM: WHvCapabilityCodeProcessorClFlushSize = 2^8
00:00:02.097024 NEM: Warning! Unknown capability 0x4 returning: 3f 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00...
00:00:02.097027 NEM: Warning! Unknown capability 0x5 returning: 03 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00...
00:00:02.097595 NEM: Created partition 0000019db1402f90.
00:00:02.097601 NEM: Adjusting APIC configuration from X2APIC to APIC max mode. X2APIC is not supported by the WinHvPlatform API!
00:00:02.097602 NEM: Disable Hyper-V if you need X2APIC for your guests!
00:00:02.097649 NEM:
00:00:02.097649 NEM: NEMR3Init: Snail execution mode is active!
00:00:02.097649 NEM: Note! VirtualBox is not able to run at its full potential in this execution mode.
00:00:02.097650 NEM: To see VirtualBox run at max speed you need to disable all Windows features
00:00:02.097650 NEM: making use of Hyper-V. That is a moving target, so google how and carefully
00:00:02.097650 NEM: consider the consequences of disabling these features.
00:00:02.097650 NEM:
00:00:02.097662 CPUM: No hardware-virtualization capability detected
00:00:02.097665 CPUM: fXStateHostMask=0x7; initial: 0x7; host XCR0=0x7
00:00:02.099288 CPUM: Matched host CPU INTEL 0x6/0x97/0x2 Intel_Core7_AlderLake with CPU DB entry 'Intel Core i7-6700K' (INTEL 0x6/0x5e/0x3 Intel_Core7_Skylake)
00:00:02.099325 CPUM: MXCSR_MASK=0xffff (host: 0xffff)
```

I don’t know, it might be due to my CPU architecture (I have a 12900K). Definitely something seems to go awry with the APIC – enabling IO-APIC causes the problem described here: https://forums.virtualbox.org/viewtopic.php?f=6&t=107028

I did notice though that the rockylinux box was using an IDE controller and not SATA (like the Ubuntu box was using) and when I moved the vmdk file to a SATA controller I created, the box would start up at least, and not kernel panic, but then after a while complain about partitions that were missing that it expected.

In any case, in light of these logs, and also the fact that these boxes work when I switch off Hyper-V – it looks to me like it’s got something to do with this compatibility mode VirtualBox defaults to when Hyper-V is enabled.

For now I will just stick to using the Hyper-V compatible boxes.

In any case, thank you for being an excellent individual and responding to my comments!

I assume you’re German? Mainly because of how your directories are named 😉 I immigrated from Holland myself and have been living in Hesse for ten years.

All the best and many thanks!

You’re welcome. My pleasure ;)

It took me several hours to unearth the problem with 127.0.0.1 and then try different solutions. Your article is great! Thank you!

Glad you liked it.

I just started learning Ansible with Jeff’s book. Getting Vagrant up was the first obstacle. This article is a life saver for me.

This really helped me to set up Vagrant, VirtualBox and Ansible on my workstation. I was already getting frustrated^^

Glad you liked it.

Does anyone else encounter this issue? When there is a space in the path, the ‘vagrant up’ command gets stuck, displaying an error message that says it can’t find the VirtualBox path.

Here is the path I’ve tried to set:

export PATH="$PATH:/mnt/d/Program Files/Oracle/VirtualBox"

For me it is C:\Program Files\Oracle\VirtualBox, so your export should be export PATH="$PATH:/mnt/c/Program Files/Oracle/VirtualBox"

Hello – I’m receiving the same error as Tom when performing a “vagrant provision” for the ansible playbook. Please help.

Please note: I’m able to successfully SSH into my vagrant box using: “vagrant ssh”.

ERROR:*************************************************************************

==> ubuntu2004: Running provisioner: ansible...

Vagrant gathered an unknown Ansible version:

and falls back on the compatibility mode '1.8'.

Alternatively, the compatibility mode can be specified in your Vagrantfile:

https://www.vagrantup.com/docs/provisioning/ansible_common.html#compatibility_mode

ubuntu2004: Running ansible-playbook...

PLAY [all] *********************************************************************

TASK [Gathering Facts] *********************************************************

fatal: [ubuntu2004]: UNREACHABLE! => {"changed": false, "msg": "Failed to connect to the host via ssh: Warning: Permanently added '[172.23.112.1]:2222' (ED25519) to the list of known hosts.\r\nsign_and_send_pubkey: no mutual signature supported\r\nvagrant@172.23.112.1: Permission denied (publickey,password).", "unreachable": true}

PLAY RECAP *********************************************************************

ubuntu2004 : ok=0 changed=0 unreachable=1 failed=0 skipped=0 rescued=0 ignored=0

Ansible failed to complete successfully. Any error output should be

visible above. Please fix these errors and try again.

wsl.conf

******************

[boot]

systemd=true

# Enable extra metadata options by default

[automount]

enabled = true

root = /mnt/

options = "metadata,umask=77,fmask=11"

mountFsTab = false

ansible.cfg

***************************

[ssh_connection]

ssh_args = -C -o ControlMaster=auto -o ControlPersist=60s

ansible version

***************************

Ansible [core 2.16.6]

config file = /etc/ansible/ansible.cfg

configured module search path = ['/home//.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /home//.local/lib/python3.10/site-packages/ansible

ansible collection location = /home//.ansible/collections:/usr/share/ansible/collections

executable location = /home//.local/bin/ansible

python version = 3.10.12 (main, Nov 20 2023, 15:14:05) [GCC 11.4.0] (/usr/bin/python3)

jinja version = 3.1.3

libyaml = True

Hello Lakerfan,

your error message differs from Tom’s. I can’t reproduce it at the moment, since I currently don’t have a Windows machine.

My assumption is that the Ansible play just uses the wrong credentials, since it can reach the VM but can’t authenticate using password or publickey. Can you share your Vagrantfile?

Kind regards

Philip

Hello Good Morning,

Thanks for replying to my question.

I had to add config.vm.synced_folder ".", "/vagrant", disabled: true to my Vagrantfile because it would otherwise return the following error:

vm:

* The host path of the shared folder is not supported from WSL. Host

path of the shared folder must be located on a file system with

DrvFs type. Host path: .

Please see the below files.

Vagrantfile:

# -*- mode: ruby -*-
# vi: set ft=ruby :

Vagrant.configure("2") do |config|
  config.vm.box = "ubuntu/trusty64"
  config.vm.synced_folder ".", "/vagrant", disabled: true
  config.ssh.insert_key = false

  config.vm.provider "virtualbox" do |vb|
    # # Display the VirtualBox GUI when booting the machine
    # vb.gui = true
    #
    # # Customize the amount of memory on the VM:
    vb.memory = "4024"
    vb.cpus = "2"
  end
  #
  # VM configuration
  config.vm.define "ubuntu2024" do |ubuntu2024|
    ubuntu2024.vm.hostname = "ansible"
  end

  config.vm.provision "ansible" do |ansible|
    ansible.playbook = "playbook.yml"
  end
end

************************************************************************************************

vagrant_ansible_inventory:

ubuntu2024 ansible_ssh_host=172.23.112.1 ansible_ssh_port=2222 ansible_ssh_user='vagrant' ansible_ssh_private_key_file='/mnt/c/Users//.vagrant.d/insecure_private_keys/vagrant.key.ed25519'

I can see that you changed the box image from my example. I’m not sure whether something regarding SSH connections is configured in there. You said that you can do a vagrant ssh into the box, right? Have you also tried it manually using ssh and the key that is configured in your Ansible inventory?

Also, can you double-check that the correct public key exists within the VM for the user vagrant?

Also, have you played around with setting config.ssh.insert_key to true or using your own private keys? See more information here: https://developer.hashicorp.com/vagrant/docs/vagrantfile/ssh_settings#config-ssh-insert_key

Hello,

It works! Re-created the vagrant file using the generic/image box in this guide.

Wonder what is different between the two builds that would cause the ansible playbook to fail on the trusty image.

Also, tried changing the code -> config.ssh.insert_key to true and it returned the same error.

Guess more troubleshooting/testing is required to get the trusty image to work. At least it works in that build.

Thanks again.